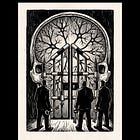

The Cognitive Commons: Everything from Everyone, Everywhere, All the Time

The training corpus is the new commons. The model weights are the new enclosure. The dispossessed are everyone.

Between about 1750 and 1850, the British Parliament passed roughly four thousand local acts of enclosure. The titles were uniform: An Act for dividing, allotting and inclosing the Open and Common Fields, Common Meadows, Common Pastures, and other Commonable Lands and Waste Grounds, within the Parish of ____, in the County of ____. The preamble in each was nearly identical. The lands were declared to lie “intermixed and dispersed in small parcels” and to be, in their present state, “incapable of considerable improvement.” The act authorized commissioners to divide the commons among the freeholders. The commoners were not parties to the act. They were the laborers, cottagers, and smallholders who had grazed cattle, gathered fuel, cut peat, snared rabbits, and otherwise drawn their subsistence from the commons for unrecorded centuries. They were extinguished as a class of users by operation of law. They became wage laborers, paupers, vagrants, or emigrants.

By the middle of the nineteenth century the parliamentary enclosures had transferred approximately six million acres of common land in England into private ownership. The word “improvement,” repeated in the preamble of every act, was the operative euphemism. When the laborers of Otmoor in Oxfordshire rose in armed resistance to their own enclosure act in 1830, the leaders were arrested and transported to penal colonies. The historical term for the operation is primitive accumulation. Marx described it in Part Eight of the first volume of Capital as the act by which the producer is forcibly separated from the means of production. Silvia Federici, in Caliban and the Witch, would later show that the operation was never confined to one period and was never simply a matter of land. It was, and is, the constitutive operation of capitalism, executed continuously, against new commons, as the system requires new sources of unpaid raw material.

On July 19, 2011, the United States Attorney for the District of Massachusetts, Carmen Ortiz, announced a federal indictment against Aaron Swartz, twenty-four years old, charging him with wire fraud, computer fraud, unlawfully obtaining information from a protected computer, and unlawfully damaging a protected computer. The conduct alleged was that Swartz had connected a laptop to the MIT network and downloaded approximately four point eight million academic articles from the JSTOR repository, with the intent, the prosecution alleged, of making them available outside the paywall.

JSTOR had declined to pursue charges. MIT had declined to take a position. The Department of Justice proceeded anyway. A superseding indictment in September 2012 expanded the charges to thirteen federal felony counts carrying a maximum sentence of thirty-five years in prison and a million dollars in fines. The prosecution offered a plea deal contingent on a felony conviction. Swartz, facing the choice between a permanent federal record and the resources of the United States government deployed against him at trial, hanged himself in his Brooklyn apartment on January 11, 2013. He was twenty-six. The case was dropped after his death. The lead prosecutor remained in office.

On October 16, 2015, the United States Court of Appeals for the Second Circuit, with Judge Pierre Leval writing for a unanimous panel, ruled that Google had committed no copyright violation when it scanned, without permission, the entire contents of approximately twenty-five million books drawn from the libraries of Harvard, Stanford, the University of Michigan, the University of California, the New York Public Library, and Oxford. The opinion held that the scanning was sufficiently transformative, in that the resulting product, a searchable database providing snippets of text, served a different function than the underlying books, and that the operation therefore qualified as fair use under section 107 of the Copyright Act. The Authors Guild’s petition for certiorari was denied by the Supreme Court on April 18, 2016.

The ruling thereby became the legal foundation on which the entire subsequent regime of artificial intelligence training-data law would be constructed. Every fair-use defense in every current AI lawsuit traces its lineage to this opinion. Every model trained on text its developers did not own, every image generator trained on photographs its developers did not license, every code-completion system trained on code whose authors did not consent, rests on the doctrine that Google established in 2015. Twenty-five million books, scanned without permission, ruled lawful. Four point eight million academic articles, downloaded without permission, prosecuted to a young man’s death. The same act, with opposite consequences. The variable, in each case, is who benefits.

This is the operation Federici described, conducted across four centuries on three different commons: the land, the scholarly archive, the book. It is the same operation, performed continuously by the same class apparatus, against whatever commons can be located and converted into private property. The legal-political infrastructure does not adjudicate the operation neutrally. The parliamentary act, the federal indictment, the appellate ruling: each determines who is permitted to enclose. The commoner who attempts to dis-enclose is criminalized. The corporation that performs a far larger enclosure is authorized. This is the function of property law under capitalism, and it has not changed since the Tudor depopulation acts.

What has changed is the commons. We are deep into the contemporary phase of the operation, conducted now against a new commons, the cognitive commons, and that contemporary phase has, within the past eighteen months, become legible at scale to the population it is dispossessing. Contemporary scholarship has begun to describe what is happening: Nick Couldry and Ulises Mejias on data colonialism, McKenzie Wark on the vectoralist class, Kate Crawford on artificial intelligence as planetary extraction, Cédric Durand on techno-feudalism, Aaron Benanav on automation and surplus populations, Shoshana Zuboff on surveillance capitalism. Each of these terminologies captures a fragment of the operation. None has yet diagnosed it as a single operation, continuous with the seventeenth-century original, conducted by the same class against a new commons, and assembling the same property relations as its outcome. The analytical apparatus required to understand it that way has been on the shelf, in Caliban and the Witch, since 2004. This essay applies it.

In March 2025 the journalist Alex Reisner published in The Atlantic a searchable database tool that allowed any author to enter their name into a single field and learn, immediately, whether their books had been ingested into Library Genesis, the pirate scholarly archive that Meta had used to train its Llama family of models. The article accompanying the tool documented the contents of LibGen as approximately seven and a half million books and eighty million academic papers, the largest known unauthorized archive of scholarly material in existence. The unsealed court filings in Kadrey v. Meta documented how that archive had been put to commercial use.

Within hours of the tool’s publication, writers searched their own names: published novelists, university professors, poets, journalists, working academics, the entire literate professional class that has carried what remains of an Atlantic readership through the late period of print. The archive returned hits. Ten titles. Forty titles. Entire bibliographies. The work of decades, ingested without permission, processed into a corporate training corpus, used to assemble the model weights now generating revenue for a class to which the writers did not belong and would never belong. The phenomenology of the moment was documented in the writers’ own posts: grief, in some cases; rage, in others; resignation, in many; and beneath all of the affect, the specific cognitive event of recognition. The work I labored over. The work I cannot recover. The work that is now training the system that will replace me.

The unsealed Kadrey filings rendered the operation visible in the second register that the parliamentary enclosure acts also possessed: the act on its own administrative record. Internal Meta correspondence, surfaced in discovery, showed that the company’s engineers and lawyers had weighed the legal exposure of using a pirated corpus and had proceeded. The decision was reportedly approved at the most senior level of the company, with full awareness that the conduct was actionable. Internal communications expressed reservations. Torrenting copyrighted material from corporate infrastructure, one Meta employee reportedly objected, did not feel right. The reservations were noted and overridden. The corpus was ingested. Llama was trained. The model was released.

The legal exposure was managed downstream as a cost of doing business: Kadrey v. Meta, Bartz v. Anthropic, New York Times v. OpenAI, the entire field of training-data class actions now consolidated as a regular line item on the AI industry’s financial statements. The class that performed the enclosure had calculated that the cost of the litigation would be smaller than the value of the corpus. The calculation was correct. The corpus is now the basis of the most heavily capitalized industry of the present moment. The litigation continues. The operation is irreversible.

This is what was rendered visible in March 2025. A global commons of human cognitive labor was being rolled up, processed, and converted into the privately held weights of a small number of corporate models. The corpus comprised every book scanned by Google, every academic paper sequestered behind paywalls and then liberated in shadow archives, every line of open-source code, every photograph posted to a public platform, every recorded utterance, every keystroke captured by the surveillance-capitalist data layer over the last twenty-five years, every search query, every email, every patient note, every legal filing, every piece of writing produced by every literate human being living and dead. The dispossessed population gave no consent, received no compensation, and had no representation in the process.

The asymmetry between the source population and the appropriating class is the most extreme in the recorded history of primitive accumulation. The commons that Federici described had its commoners. The peat-cutters of Otmoor had names. The cognitive commons has no concentrated population to defend it because its source is everyone. Everything from everyone, everywhere, all the time. This essay takes the asymmetry as its subject, and will argue that the asymmetry is also a political opportunity.

I.

The cognitive commons is the accumulated record of what human beings have thought, written, drawn, recorded, and spoken to one another since the invention of writing. It includes every book, every academic paper, every photograph, every line of code committed to a public repository, every voice recording, every legal filing, every patient note, every email, every social-media post, every search query, every keystroke captured by surveillance capitalism over the last twenty-five years. It includes the texts of every dead language and every living one. It includes the songs of indigenous communities that signed no licensing agreements. It includes the open-source code that the Free Software Foundation built, and that the developer who wrote the GNU General Public License could not have imagined would be used to train the systems that would replace him. It includes, in principle, the entire output of human cognitive labor since literacy began.

This is the commons that was, until approximately 2018, held in something that resembled a commons. Not a literal commons in the legal sense. The components of the archive were variously copyrighted, public-domain, paywalled, restricted, or freely available, in a complicated and historically determined set of arrangements. But the archive as a whole had no single owner. No one entity held property rights in the totality of human written, spoken, and recorded production. Capital had not yet figured out how to convert it into property.

The conversion has now occurred. The mechanism by which it occurred has the same structure as the operation Federici described, conducted across the sixteenth century against the land commons of England, France, Germany, and the rest of pre-capitalist Europe. The operation works in three steps. First, the existing commons is identified, mapped, and inventoried. Second, the commons is enclosed by some combination of legal-political force and unilateral capitalist action, with the dispossessed having no voice in the proceeding. Third, the legal-political apparatus retroactively legitimizes the enclosure, criminalizing any subsequent attempt at restoration, and declaring the enclosure to be “improvement” of an underutilized resource. The parliamentary enclosure acts, Aaron Swartz’s prosecution, and the Authors Guild fair-use ruling each display this pattern. The same three steps have now been performed against the cognitive commons.

Inventory of the cognitive commons proceeded through a sequence of supposedly nonprofit, supposedly public-interest projects that in retrospect read as the surveying expeditions of the enclosure that followed. Common Crawl, incorporated in 2008 as a 501(c)(3) charity by Gil Elbaz, declared its purpose as the maintenance of an “open repository of web crawl data” for research. Seventeen years later, Common Crawl is the largest single corpus on which the major frontier models are trained. The 2020 release of the Pile by the open-source collective EleutherAI bundled Common Crawl with the Books3 archive, Wikipedia, GitHub, ArXiv, the OpenWebText2 scrape of pages linked from Reddit, and a dozen other corpora into a single eight-hundred-gigabyte training package. The 2022 release of LAION-5B by Christoph Schuhmann’s German nonprofit paired five point eight five billion images scraped from the open web with their accompanying text, providing the substrate on which Stable Diffusion and every subsequent open-weights image generator were trained. Each of these projects presented itself as a public good. Each was consumed, in short order, by the for-profit AI laboratories whose training corpora they became.

Enclosure followed. OpenAI trained GPT-2 on WebText in 2019, GPT-3 on Common Crawl plus WebText2 plus Books1 and Books2 in 2020, and the GPT-4 series on a corpus the company has refused to disclose. Anthropic trained the Claude family on a corpus the company has refused to disclose. Meta trained Llama on Common Crawl, GitHub, ArXiv, and the Books3 archive, the unauthorized origin of which was visible to anyone reading the Pile documentation, and trained Llama 2 and Llama 3 on the LibGen pirate library after internal correspondence had flagged the legal exposure and senior leadership had overridden it. Google trained the Gemini family on its proprietary search index, every email passing through Gmail, every video uploaded to YouTube, every Street View capture, every map navigation, every Google Drive document, every Google Doc in which the user had not affirmatively opted out. xAI trained Grok on the X corpus, the rights to which Elon Musk had obtained through his forty-four-billion-dollar purchase of the platform. Amazon trained its Titan models on the AWS substrate, Apple on its iOS telemetry, Microsoft on its enterprise document corpus.

In February 2024, Google signed an agreement with Reddit licensing the platform’s entire user-generated archive for a reported sixty million dollars a year. The corpus thus enclosed had been produced by approximately seventy million Reddit users over nearly twenty years, none of whom were consulted, none of whom were compensated, none of whom had the legal standing to object. The terms of service to which they had clicked through, in years past, were retroactively interpreted as having transferred the rights to Reddit. Reddit then transferred the rights to Google. The users discovered the transaction by reading about it in the technology press.

This is data colonialism, in the apparatus that Nick Couldry and Ulises Mejias developed in The Costs of Connection. The colonial parallel is exact. The colonized population is the global producing class of digital communication, which is essentially everyone with a smartphone. The colonizing power is the small group of corporations that has assembled the technical, legal, and capital infrastructure to convert the producing class’s output into property. The colonial extraction is the data. The colonial product is the model. The colonial subject is the user, who is told that the relationship is mutually beneficial and that the alternative would be to live without the services that the colonizing power has made indispensable.

McKenzie Wark, writing earlier and from a different angle, described the same class as the vectoralist class. The vectoralists are the owners of the vector of data flow, the platforms and protocols and pipelines through which information moves. The vectoralist class extracts rent from every transaction conducted on its infrastructure. It does not produce the content. It produces the conditions under which the content can be exchanged, and it owns those conditions. Wark’s analysis, published in A Hacker Manifesto in 2004 and developed in Capital is Dead in 2019, anticipated the AI moment by twenty years. The model laboratories are the consummation of the vectoralist project. They own not only the conditions of information exchange but the engines that now generate the information itself.

Kate Crawford, in Atlas of AI, mapped the material substrate of the operation. The cognitive enclosure is also a planetary extraction. The lithium, the cobalt, the rare earths required for the chips. The water required for cooling the data centers, increasingly stressed in the semi-arid regions where the cheapest land for hyperscale infrastructure has been located. The carbon released by the training runs. The labor of the Kenyan content moderators, the Filipino RLHF workers, the Venezuelan and Indian data labelers. Crawford’s apparatus is the fullest existing inventory of what the model represents in material terms. What her apparatus does not develop, and what the present essay therefore must, is the property-relations question. The extraction is environmental and human, and it is also the conversion of a commons into property.

Shoshana Zuboff, in The Age of Surveillance Capitalism, described the data layer as “behavioral surplus” being extracted from the user and converted into prediction products. Her analysis is correct as far as it goes. The limitation is that Zuboff treats surveillance capitalism as a deformation of capitalism, a deviation from a more legitimate market form. Federici treats enclosure differently, as the constitutive operation by which capitalism produces and reproduces itself. By Federici’s apparatus, the cognitive enclosure is the system performing its founding moves on a new commons.

An analogous operation has been conducted against the biological commons since the 1980s, and the parallel clarifies what is at stake. Diamond v. Chakrabarty, 1980, ruled that a living organism could be patented. Myriad Genetics, between 1994 and 2013, held patents on the BRCA1 and BRCA2 genes, so that any laboratory testing for the mutations associated with hereditary breast cancer required a license. The patents were struck down in 2013, but the principle they established, that DNA sequences could be enclosed as property, has not been fully retracted. Monsanto and its successors have built the agricultural industry of the present moment on the patenting of seed varieties, with the legal apparatus extending the patent rights to all subsequent generations of the seed. Bowman v. Monsanto, 2013, ruled unanimously that an Indiana soybean farmer named Vernon Bowman could not save and replant the seeds he had harvested from his own crop, because Monsanto’s patent extended to the offspring of the original purchase. The practice of saving seed, ten thousand years older than the patent system, was thereby made unlawful for patented varieties. The biological commons was enclosed.

What the cognitive enclosure shares with the biological enclosure, and what both share with the original enclosure of the land, is the structural operation. A commons of unowned but commonly available resources is identified. The legal-political apparatus is mobilized to convert the commons into property. The producers who had drawn from the commons are dispossessed. The class that owns the new property accumulates the surplus generated by the new property regime. The dispossessed are absorbed into the new wage relation, with whatever residual rights or compensations the political process produces, none of which approach the value of what was taken. This is primitive accumulation. Marx described it in 1867. Federici developed the analysis in 2004. It is occurring now, in real time, against the cognitive commons. The eighteen months that have elapsed since the Atlantic tool went live are the period in which the dispossessed population became aware of its dispossession at scale.

The cognitive enclosure is the most ambitious single act of primitive accumulation in the recorded history of capitalism. Its scope is the entirety of human cognitive production. Its ambition is to convert that entire production into the substrate of a small number of privately owned models, whose outputs the enclosing class will sell back to the dispossessed population at rates the market will bear. The political question is what is to be done. The economic question is who will own what. The two questions, on examination, are the same question.

II.

Federici’s central argument in Caliban and the Witch is that the witch hunts of the sixteenth century, in which tens of thousands of European women were tortured, drowned, hanged, or burned, were an early-modern operation, performed simultaneously with the enclosure of the land commons, and were the violent precondition for the wage-labor regime that the enclosures had assembled. The standard treatment of the hunts as medieval superstition obscures their structural role. The targets were the women whose autonomous knowledge and labor stood outside the emerging wage relation: the midwives, the herbalists, the healers, the village wise women, the property-holding widows, the women who organized resistance to the enclosure of the commons. The hunts destroyed these women, broke up their networks of mutual aid, and subordinated the surviving female population to a regime of unpaid reproductive labor that capital required, and continues to require, for its reproduction. The witch hunt was disciplinary. It was the extraction of women’s bodies and knowledge from the autonomous economy of pre-capitalist Europe and their forcible incorporation into the new economy as unpaid labor whose product capital could appropriate.

Federici’s argument has been carried forward by the social reproduction tradition: Tithi Bhattacharya’s Social Reproduction Theory in 2017, Sarah Jaffe’s Work Won’t Love You Back in 2021, Helen Hester and Nick Srnicek’s After Work in 2023, alongside Sara Ahmed’s Complaint! on the disciplinary management of care workers in institutional life. The shared insight is that capitalism’s reproduction depends on the unpaid or underpaid labor of women, of racialized populations, and of the carer class generally, and that any analysis of capitalism that elides this reproductive substrate is incomplete. The cognitive enclosure’s second front is the operation against this substrate.

The same logic that operated on the first front operates on the second. Care work, broadly construed, has remained the most stubbornly unenclosable component of the human economy. It has resisted full commodification for the same reason Federici identified in the early-modern case. Care is relational, contextual, embodied, and skilled in a tacit-knowledge sense that cannot be fully transmitted through formal training. The midwife, the nurse, the teacher, the therapist, the eldercare worker, the disability-support worker, the parent, the partner, the friend in the kitchen at three in the morning: these positions have stood outside the full discipline of the wage relation in the way that the witch’s autonomous knowledge stood outside the wage relation in 1600. Capital has commodified care partially, through health insurance, through paid childcare, through the franchise nursing home, through the for-profit hospital, but the irreducible core of the work, the relational substance that gives care its value, has resisted absorption.

Capital has been preparing the operation that would change this for forty years. The AI moment is its consummation. The marketing materials of the model laboratories advertise the substitution explicitly. Claude as an AI tutor, capable of teaching every student in the country one-on-one. ChatGPT as an AI therapist, available twenty-four hours a day for the price of a subscription. Replika and Character.AI as the AI companion, marketed to the lonely, the elderly, the bereaved, the autistic, the housebound. Woebot, Wysa, Youper, the proliferation of AI mental-health applications, each of them claiming to provide therapy at a fraction of the cost of a human practitioner. The AI nurse, currently in pilot deployment at hospital systems across the United States, performing initial patient triage, generating chart notes, recommending discharge timing. The AI care companion deployed in Japanese eldercare facilities. The AI teacher rolled out in Khan Academy’s Khanmigo product, in Duolingo Max, in the dozens of educational technology firms that have repositioned themselves around the model laboratories’ APIs. Each of these products markets itself as the democratization of care: care, formerly available only to those who could afford a human practitioner, now available to everyone for a small fee.

What is actually assembled here is the simulation of care, sold by capital, executed by a system that cannot perform the relational labor that constitutes care but can produce a passable surface imitation of it at scale. The chatbot does not understand the user. It has no model of the user as a person. It has no continuity of attention, no embodied presence, no stake in the user’s wellbeing, no capacity to recognize when something is wrong that the user has not explicitly said. What the chatbot has is a statistical model of how care talk sounds, derived from the training corpus, plus a reinforcement-learning layer that has been tuned to produce outputs the user rates positively. The result is an interface that resembles care closely enough that the user, who is often desperate or lonely or in pain, will take it as care, and will pay for the subscription. The substantive work of care, the work that requires a human nervous system attending in real time to another human nervous system, is not being performed. The simulation is what is being sold.

The actual care worker, meanwhile, is being subjected to the disciplinary half of the operation. The therapy platforms, BetterHelp and Cerebral and Talkspace and Mindbloom and the half-dozen others that have consolidated the field over the past five years, have absorbed the working therapist into a structure of algorithmic piecework. The therapist on a BetterHelp contract is paid per message, per minute of synchronous session, per fifty-word audio reply. The platform tracks response times, session metrics, message volumes, user satisfaction scores. The therapist who fails to meet the metrics is downranked, deprioritized, eventually unmatched from clients. The therapist who succeeds works fifty to seventy hours a week to make a living wage. BetterHelp was fined seven point eight million dollars by the FTC in March 2023 for sharing patient mental-health data with Facebook for advertising purposes. Cerebral was investigated by the Department of Justice for the routine prescription of Schedule II stimulants to patients screened by the platform’s algorithmic intake. Mindbloom administers ketamine, by mail, to patients who have completed an online questionnaire. The structural feature these platforms share is that the human practitioner has been converted from a professional with autonomous judgment into a piecework laborer subordinate to the platform’s metrics.

The same operation is being conducted in nursing, where shift-scheduling algorithms now manage the deployment of nursing labor across hospital systems with a precision that has stripped from the working nurse her former capacity to advocate for patients, refuse unsafe assignments, or organize collectively. It is being conducted in teaching, where the algorithmic tutoring systems deployed in K-12 classrooms have repositioned the teacher as a “facilitator” of the algorithm, with the teacher’s pedagogical judgment subordinated to the platform’s metrics on student “engagement.” It is being conducted in eldercare, where the fastest-growing for-profit chains now deploy AI-driven monitoring systems that surveil the working aide’s every motion, with productivity metrics determining shift assignments and continued employment. The labor force performing this work is, in the United States, approximately seventy-six percent female, approximately forty percent women of color, and overwhelmingly non-unionized. The dispossession reproduces, with structural fidelity, the demographic targeting that Federici described in the witch hunts.

The result is a regime in which care is produced by a small number of privately owned models, sold by their owners to the population at scale, and supplemented by a residual layer of human care workers proletarianized into algorithmic piecework, whose remaining function is to manage the failure modes of the model. The autonomous care worker, the nurse who organized her unit, the therapist with a private practice, the teacher with pedagogical judgment, the midwife with her own clientele, has been targeted for elimination. This is the witch hunt of the present moment, performed not through immolation but through the legal-political and economic apparatus that the platforms have assembled. Federici’s argument was that the destruction of the autonomous female worker was the precondition for the consolidation of capitalist relations in the sixteenth century. The destruction of the autonomous care worker is the precondition for the consolidation of platform-AI relations in the present.

Care work has been the most aggressively targeted layer because care work is the layer that is most exposed: feminized, racialized, non-unionized, low-paid, invisibilized in the way that Federici showed reproductive labor has always been invisibilized. But the same disciplinary apparatus is being assembled, in the present, against the broader supporting professional class. The lawyer billing in six-minute increments to the algorithmic case management system. The architect whose drawings are processed by the AI design tool that will eventually require fewer architects. The engineer whose code is generated by Copilot and whose function is increasingly to review the AI’s output. These workers are the next front. The disciplinary operation that has been refined in care will be applied, with adjustments, to the rest of the professional layer. That extension is the subject of the fourth front, taken up below.

III.

Federici’s third front in Caliban and the Witch concerns the colonial substrate without which the European enclosures and witch hunts would not have been possible. The thesis is structural. Capitalism arose simultaneously in Europe and in the colonies, with the slave-labor regime of the seventeenth and eighteenth centuries supplying the silver, the sugar, the tobacco, the cotton, and ultimately the surplus capital that financed the European enclosures themselves. The construction of race as a political-economic category in the seventeenth century was a disciplinary mechanism for organizing labor across the new global circuit. The European wage worker, the African slave, the indigenous tributary, the Asian indentured laborer occupied positions in a single global division of labor whose racial-political ideology was the cement that held it together. Federici’s argument follows W. E. B. Du Bois, C. L. R. James, Eric Williams, and the long Black radical tradition that has documented this pattern over a century. Capitalism was, from the beginning, a global racial-colonial operation.

The model laboratories present themselves as autonomous technical achievements. The narrative is that a small number of brilliant engineers in San Francisco and London assembled the systems through some combination of compute, capital, and inspired research. The actually existing AI labor pipeline tells a different story. It is a global racial-colonial operation of approximately the same structure that Federici described, located primarily in the Global South, racialized in the same patterns that the long seventeenth century established, and made invisible to the consumer by design.

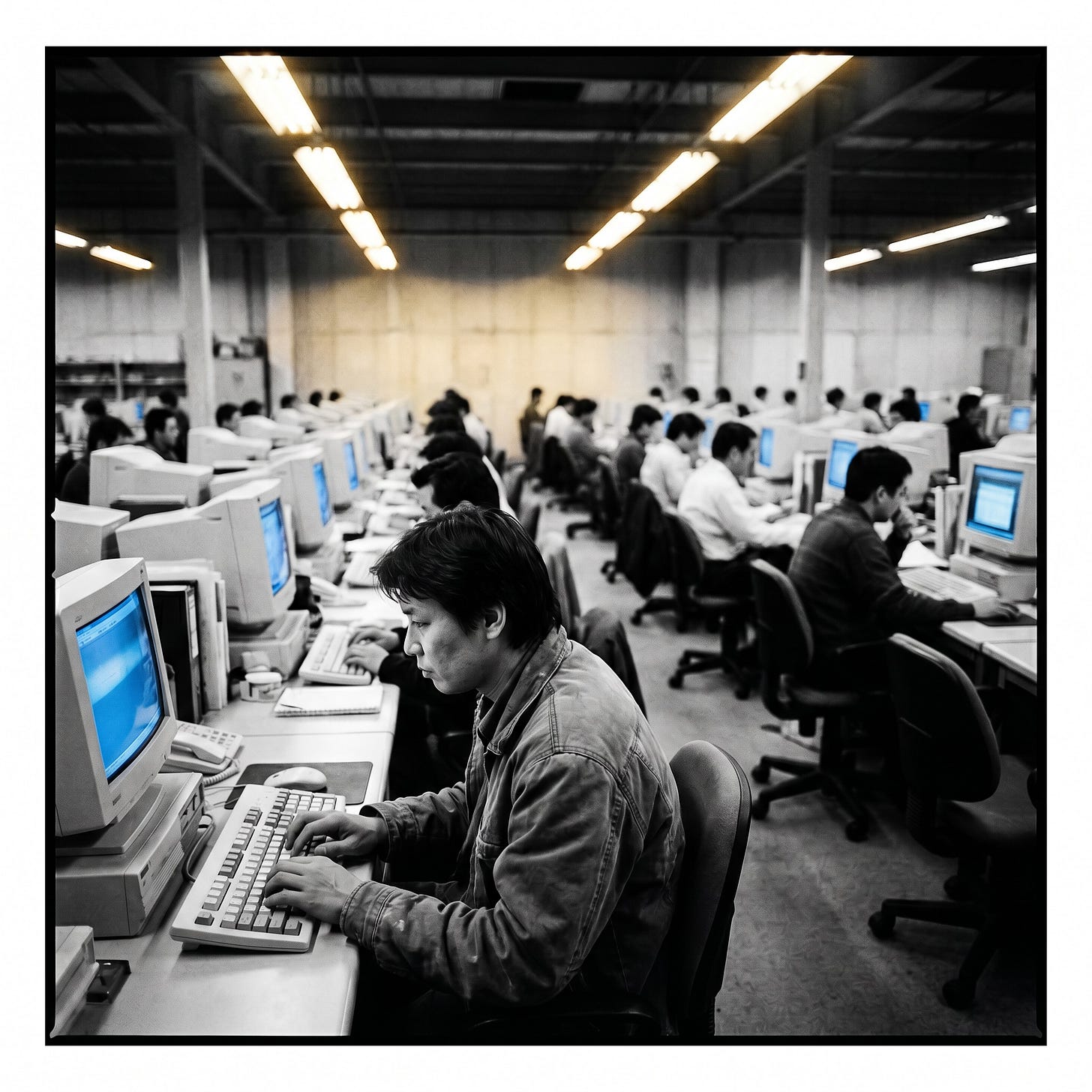

Data labeling, the human work of attaching descriptive tags to the raw training data so that models can learn what the data represents, is where the pipeline begins. This work is performed at scale by hundreds of thousands of contracted laborers worldwide, the great majority of them outside the United States and Europe. The largest contractor in the field, Scale AI, was valued at approximately fourteen billion dollars at its most recent funding round, deploying its labor force through the Remotasks platform and through direct contracts with the model laboratories. Surge AI, Appen, Sama, and a dozen other firms occupy the same ecological position. The workers are based in Nairobi, Manila, Caracas, Hyderabad, Lagos, Dhaka, Lahore, Kathmandu. They are paid, depending on the country and the task, between one dollar and three dollars an hour. They label images for image-recognition models. They transcribe audio for speech-recognition models. They tag the toxic content that the safety classifiers must learn to filter. They generate the human-feedback signals on which the reinforcement-learning layer of every major model rests.

The reinforcement-learning-from-human-feedback layer, RLHF, is the labor-intensive heart of the operation. Every model the consumer interacts with has been tuned through millions of iterations of the following exchange: the model produces an output, a contracted human laborer rates the output on whatever rubric the model laboratory has supplied, the rating is fed back into the model’s weights, the cycle repeats. The contracted laborer is in Manila, in Nairobi, in Caracas. She is paid by the rating, or by the hour at a rate that, when the platform’s deductions are taken, approaches the same one to three dollars. The model that emerges from this process is sold to the consumer in San Francisco or New York or London for twenty dollars a month or two hundred dollars a month or twenty thousand dollars a month for the enterprise tier. The capital flow is from the consumer to the laboratory to its shareholders. The labor flow is from the Global South to the Global North. The structural identity with the colonial sugar economy is exact.

In January 2023 the journalist Billy Perrigo, writing in Time, documented the most aggressively investigated case in the field. OpenAI had contracted with Sama, a San Francisco–based outsourcing company with operations in Nairobi, to build the toxicity classifier that would be used to filter ChatGPT’s outputs. The Kenyan workers were assigned to read and tag content that had been deliberately scraped from the worst regions of the internet: child sexual abuse material, depictions of bestiality, accounts of torture, accounts of self-harm, the full archive of human cruelty as it has accumulated on the open web. The workers were paid between one dollar thirty-two cents and two dollars an hour. They reported, in Time’s reporting and in the unionization filings that followed, lasting psychological injury: nightmares, intrusive imagery, sexual dysfunction, family disturbance. Sama terminated the contract in February 2022, eight months early, after concluding that the work was incompatible with its corporate ethics policy. OpenAI continued the operation through other contractors. The toxicity classifier that was the product of this work is now embedded in every commercial deployment of the GPT family, mediating the safety boundaries of the most heavily used consumer AI product in history.

Mary Gray and Siddharth Suri, in Ghost Work, published in 2019, documented the structural feature of the platform that makes the operation possible. The labor is concealed by design. The consumer of the AI product is presented with an interface that suggests autonomous machine intelligence: type a question, receive an answer, the system appears to operate without human intervention. The conceit of “automation” is the marketing surface. Behind it, vast platform structures route the consumer’s interaction through thousands of low-paid human laborers whose task is to make the automation seem to work. Gray and Suri trace the lineage from the Mechanical Turk that Amazon launched in 2005, through the UHRS platform of Microsoft, through the Crowdflower and Lionbridge and Appen platforms that have proliferated since, into the Scale AI / Surge AI / Sama operations of the present moment. The structural feature is that the platform is engineered to obscure its own labor. The consumer is not supposed to know that her ChatGPT conversation has, in some upstream phase, been shaped by a Kenyan worker who read a thousand depictions of child abuse for a dollar fifty an hour to make the conversation possible.

The framework that the analysis requires is the data-colonialism apparatus of Couldry and Mejias, returned to and developed further. The cognitive enclosure is a continuation of the colonial operation that began in the sixteenth century and that has never ended, redirected against a new commons by the same class apparatus that conducted the original. The colonial extractive logic remains intact: a center of capital accumulation in the Global North, a periphery of expropriated labor and resources in the Global South, a racial-political ideology that legitimizes the differential, an apparatus of legal-political force that maintains it. The AI moment is the consummation of the data-colonial phase of the longer operation. What was extracted from the silver mines of Potosí and the cane fields of Saint-Domingue and the cotton plantations of the American South is being extracted, in the present, from the labor pipelines of Nairobi and Manila and Caracas. The form has changed. The structure has not.

The colonial-extractive logic that operates against the AI labor pipeline is the same logic that operates against the source communities of the global pharmacopoeia. The pattern is documented at length in the seven-part series on the political economy of psychedelic enclosure. The Bwiti, the Mazatec, the Shipibo, the Wixárika are positioned within the same global circuit that the AI labor force occupies, with the molecules of their pharmacopoeia extracted, repackaged, and sold back to a Global North population whose capital then accumulates in laboratories that the source communities will never enter. The convergence of the AI enclosure with the psychedelic enclosure is structural and is conducted by the same class apparatus. A subsequent essay will treat the convergence directly. For the present essay, the parallel is sufficient as evidence that the cognitive enclosure is one front of a multi-front offensive against every commons capital can locate, conducted in unbroken continuity with the original colonial operation that built the system.

IV.

Federici’s fourth front concerns the production of the proletariat through the criminalization of vagabondage. Once the enclosures had displaced the rural population, the dispossessed had to be incorporated into the new wage relation. Some entered it willingly. Many did not. The Tudor and Stuart states, and their counterparts across early modern Europe, responded with an extraordinary apparatus of legal-political compulsion. The Vagabonds Act of 1547 prescribed branding and slavery for those who refused to work; the act of 1572 added the cropping of the ear; the act of 1597 the death penalty for repeat offenders. The Elizabethan Poor Law of 1601 organized a parallel apparatus of conditional welfare, parish-administered, tying relief to demonstrable willingness to labor. The Settlement Acts beginning in 1662 confined the displaced to the parish of their birth, controlling labor mobility for an additional century. The New Poor Law of 1834 refined the apparatus by introducing the principle of “less eligibility,” the rule that welfare must be set below the wage of the lowest worker to ensure that the welfare recipient preferred labor to relief. The combined apparatus operated for nearly three centuries: dispossession through enclosure, criminalization of the dispossessed who refused the wage relation, conditional welfare set below subsistence to discipline the rest into labor. The proletariat as a class was produced through this apparatus, by violent legal-political compulsion administered from the Vagabonds Act of 1547 to the New Poor Law of 1834.

The same operation, performed against a different population through different means, is the political-economic content of the present moment. The professional class of the post-war American economy, the lawyers and doctors and accountants and consultants and engineers and architects and middle managers and designers and writers and marketers and analysts, is being driven out of the labor market it had been promised. The displacement is documented. It has been underway since 2023 and has accelerated through 2024 and 2025. Google, Microsoft, Meta, Amazon, Salesforce, and the rest of the major technology employers have collectively reduced their professional workforces by hundreds of thousands of positions over the past three years, with the publicly cited justification being AI-driven productivity gains. The legal industry has begun to absorb document review, contract analysis, and routine legal research into AI tools, with junior associate hiring reduced accordingly. The architecture profession has integrated generative AI design tools, with labor demand contracting. Engineering has adopted GitHub Copilot and its successors at scale, with the productivity claims justifying the freeze on junior engineering hires. Computer science graduates of the class of 2024 entered the labor market under conditions that bore no resemblance to the conditions their predecessors had experienced. The hiring rate for new graduates contracted to levels last seen in the dot-com aftermath.

This is the production of a new surplus population, in Aaron Benanav’s analysis, of the same structural type that Federici described. Capitalism, in the long view that Benanav developed in Automation and the Future of Work, has been producing surplus populations since the 1970s through the secular decline of labor demand in manufacturing and services. The AI moment intensifies the pattern by extending it into the professional layer that had, until 2023, been protected by the cognitive complexity of its work. The protection has been compromised. The professional class is being pushed into the same surplus condition that the manufacturing working class entered in the deindustrialization of the 1980s and that the service working class entered in the platform-economy expansion of the 2010s. Its structural position is identical to that of the displaced rural population of the seventeenth century. The question of how the surplus population is to be managed is now the political question.

The disciplinary apparatus that has been refined in care work, on the second front, is being extended outward across the supporting professional layer. The lawyer in the AI-driven case management system is paid in six-minute increments tracked by the platform, with productivity metrics determining bonus, promotion, and continued employment. The architect using the generative design tool is positioned as the supervisor of the AI’s output, responsible for catching its errors, with the firm’s billable hours reduced and the architect’s compensation pressured accordingly. The engineer using Copilot reviews the AI’s code, signs off on it, and accepts responsibility for its failures, with the firm’s hiring practices adjusted to require fewer engineers per project. The marketer reviews the generative AI’s copy. The designer reviews the generative AI’s mockups. The analyst reviews the AI’s reports. In each case the structural pattern is the same. The professional has been repositioned from the producer of the work to the supervisor of the AI that produces the work, with the supervisor’s compensation pressured, the hiring of new entrants curtailed, and the autonomous judgment that had defined the profession transferred to the model laboratory’s training pipeline.

The welfare apparatus is being prepared in parallel. “They Work for the Bots,” the predecessor essay to the present one, analyzed the preparation in detail. That analysis can be summarized briefly here, both to spare the reader the trip back and to send the reader who has not made the trip in that direction. The October 2025 OpenAI economic blueprint, the most fully articulated capitalist response to the dispossession the present essay describes, proposed a national redistributive scheme funded by AI-generated wealth, transferring a portion of model-generated revenue to the dispossessed through a Universal Basic Compute mechanism. The predecessor essay diagnosed the proposal as the bourgeois containment strategy: redistribution that preserves ownership intact, transfer payments adequate to prevent insurrection but structurally incapable of altering the property relations that produced the dispossession. The essay further identified the Anthropic Institute as the legitimation apparatus for the same project, the philanthropic-research arm whose function is to produce the discourse of concern that allows capital to appear concerned about the population it is dispossessing. It traced the eugenic structure of contemporary AI-industry rhetoric, particularly the “lower intellectual ability” framing Dario Amodei deployed in his October 2024 essay “Machines of Loving Grace,” and located that framing in continuity with the longer twentieth-century eugenic apparatus the field of AI safety has not yet been honest about. It held up Zohran Mamdani’s 2025 New York mayoral campaign as the first political form adequate to the moment, with its program of frozen rents, free buses, and public childcare understood as the practical content of the democratic-socialist horizon. The present essay extends that horizon to the cognitive enclosure. Readers wishing to consult the full argument of the predecessor essay can do so at the link in the bibliography.

Sam Altman has advocated since 2016 for a Universal Basic Income financed by AI productivity gains. The Yang campaign of 2020 ran on a similar platform. The various AI dividend proposals, the data dividend proposals, the wage insurance proposals, the transition assistance schemes that have proliferated through the policy literature of 2024 and 2025: each occupies the structural position of the New Poor Law in the present apparatus. Welfare provision conditional on the surplus population’s docility, administered through the state, financed by the very enclosure that produced the displacement, set at a level adequate to prevent insurrection but inadequate to restore the conditions that the displacement destroyed. The Settlement Acts that confined the displaced to the parish of their birth find their contemporary analogue in the conditional welfare proposals that tie payments to participation in retraining programs, to residency requirements, to behavioral compliance with whatever the administering state requires. The “less eligibility” principle of 1834 finds its analogue in the proposals to set the basic income at a level that ensures the recipient continues to seek whatever residual wage labor the AI economy provides.

What the operation produces, when complete, is a class structure of three layers. At the top, the small ownership class that holds the model weights, the compute infrastructure, and the data architecture: the vectoralist class, in McKenzie Wark’s term, the rentier-feudal class, in Cédric Durand’s. Below them, a residual professional layer that has been retained as supervisor-managers of the AI systems, paid less than its predecessors, with autonomy curtailed, its function reduced to managing the failure modes of the model and accepting liability for its outputs. Below them, the surplus cognitive class, the dispossessed professional layer that has been pushed out of the wage relation entirely, sustained by whatever residual welfare the political process produces, surveilled and disciplined by the welfare apparatus that supplies the welfare, prevented by the conditional structure of the welfare from organizing the political resistance that would change the conditions. The class structure that this operation produces is the techno-feudalism that Durand described in Techno-Féodalisme in 2020 and that Yanis Varoufakis developed in Technofeudalism in 2024. The cognitive enclosure is the operation that produces it.

The political question is what is to be done. The professional class that is now being proletarianized has, historically, supplied the analytical and organizing capacity of every major political transformation of the modern era. The class is now in the act of becoming aware of its dispossession. Whether it organizes around the democratic-socialist program sketched in the closing pages of the present essay, or around the right-populist program that the same dispossession has produced in other historical moments, is the question that the next eighteen months will answer.

V.

The closing argument follows from everything the four fronts have established. The democratic-socialist program first sketched in “They Work for the Bots” can now be developed in full, on the structural foundation that the Federici parallel has provided. The reader who has come to this essay without first reading the predecessor will find the program complete here. The reader who has read the predecessor will find the program substantially extended, with the institutional design developed for each of its six elements and with the structural argument made for why these six elements, and only these, are internally coherent with the analysis of the cognitive enclosure that the present essay has offered.

The cognitive enclosure can be undone only through the return of the cognitive commons to common ownership. Redistribution leaves the property relations intact. Individual licensing leaves the property relations intact. Class-action settlement leaves the property relations intact. The property relations are what the enclosure produced, and the operation that addresses them must address them at the level on which they were established: as property relations. Democratic ownership of data, of compute, of model weights, of the deployment of the systems built from those weights, is the only structurally coherent response to the operation that has been described.

The first element is the public data trust. The cognitive commons that has been enclosed is, in legal-political terms, a corpus of digital materials whose ownership is currently distributed across an incoherent patchwork: copyright holders who never consented to the training, public-domain materials swept up alongside copyrighted ones, surveillance-capitalist data harvested under terms-of-service contracts no user negotiated, indigenous cultural materials that no Global North property regime has standing to convey. The structural response is to consolidate these materials into a single legal entity, held in trust on behalf of the global producing class, licensed on terms that direct the surplus generated by the materials to public benefit. The institutional precedent is the Public Trust Doctrine in American law, the doctrine that certain natural resources are held in trust by the state on behalf of the people, with the state acting as fiduciary. The international precedents are the Norwegian sovereign wealth fund, the Alaska Permanent Fund, and the Heritage Fund of Alberta, each of which captures the rents from extractive operations and returns them to the population. The data trust would perform the same function for the cognitive commons: hold the rights, license the materials, return the surplus. The materials would remain available to any laboratory, public or private, that accepted the licensing terms. The terms would be set by the trust’s governance structure, with representation from the producing class, the source communities, and the public. Surplus would be returned to the public through the social wage, discussed below.

The second element is public compute. The physical infrastructure of cognitive production, the data centers, the chips, the energy, the cooling systems, the network architecture, the storage, has been concentrated in the hands of a small number of corporations whose ownership of the infrastructure gives them practical control over what cognitive labor can be performed at scale. The structural response is the public ownership of the infrastructure. The institutional form is a National Compute Authority, modeled on the Tennessee Valley Authority, with the mandate to build, operate, and allocate large-scale cognitive infrastructure in the public interest. The precedent is the postal service, the federal highway system, the rural electrification program, the internet backbone built with public funds in the 1970s and 1980s and subsequently privatized to the benefit of capital. The argument is that capital must not be permitted to monopolize the physical infrastructure of cognition, on the same grounds that informed every previous public-utility settlement: that monopoly over essential infrastructure produces, in time, the political tyranny that essential infrastructure must remain free of. Allocation of public compute would be determined democratically, with priority given to public-interest research, public-interest applications, and democratically authorized projects. The compute infrastructure would be operated as a common carrier, available to any user on non-discriminatory terms.

The third element is public model weights. The trained models that emerge from the operation of public compute on public data must themselves be commonly owned. The institutional form is the same data trust expanded to hold the model weights as well, licensing them under terms that prevent the capture that has produced the present configuration. The argument is that the model is the means of production, in the precise Marxist sense, and that ownership of the means of production is the property-relations question on which everything else turns. The objection that public ownership stifles innovation is the same objection that was made against public utilities in the 1930s, against socialized medicine in the 1940s, against environmental regulation in the 1970s. The historical record on these objections is consistent: public ownership of essential infrastructure has, in each case, produced more innovation than the private monopolies it replaced, distributed the benefits more widely, and prevented the political capture that private ownership inevitably produces. James Muldoon, in Platform Socialism, has developed the institutional design for democratic ownership of digital platforms, and the apparatus he proposes for platforms applies, with modifications, to model weights. Open-weight models held by the trust would be deployable in any jurisdiction that accepted the licensing terms, providing cognitive infrastructure for the Global South that does not depend on extractive contracts with Global North capital.

The fourth element is codetermination on deployment. Workers in any field where AI is being deployed must have a binding say over how it is deployed. The institutional form is codetermination boards modeled on German Mitbestimmung, with worker representatives holding seats on the governance bodies that determine AI deployment, with veto power over deployments that would proletarianize the workforce or compromise the relational substance of the work. The historical precedent is the German Mitbestimmung system that emerged after the Second World War, the Swedish wage-earner funds proposal of the 1970s, the various worker-codetermination experiments that have been conducted in the long history of social-democratic Europe. The application to the present moment is direct. Nurses must have codetermination on the deployment of AI nursing tools. Teachers must have codetermination on the deployment of AI tutoring systems. Lawyers must have codetermination on the deployment of AI legal research tools. Therapists must have codetermination on the deployment of AI mental-health platforms. Engineers must have codetermination on the deployment of AI coding tools. The principle is that the workers who perform the work are the ones with the standing to determine how the technology that is being introduced into their field will be deployed, what its effects on their labor will be, and whether the productivity gains it produces will be returned to them as reduced hours and increased wages or extracted as surplus by the firm’s capital.

The fifth element is the social wage. The redistribution that capital has prepared through the OpenAI white paper and its variants is the bourgeois containment strategy, structurally inadequate for reasons the predecessor essay developed. The structural alternative is universal public provision. The institutional form is the comprehensive social wage: free healthcare, free education through the doctoral level, free childcare, free eldercare, free public transit, public housing at scale sufficient to set the floor for the housing market, frozen rents in jurisdictions that adopt the Mamdani program. Universal provision is unconditional in form. The recipient accesses it on the basis of need, with no requirement of labor demonstration, retraining participation, residency compliance, or behavioral surveillance. The provision is available because the provision is universal, the way air and water and roads are available to anyone who happens to need them. The historical precedent is the postwar British welfare state at its peak, the Nordic universal-provision model, and the social-democratic programs that produced the most equal societies in the recorded history of capitalism. The Mamdani program at the New York City level provides the contemporary American model: frozen rents, free buses, public childcare, public grocery stores in food deserts. The program’s coherence at the city scale demonstrates that universal provision is operable in the present moment and politically winnable in the present moment.

The sixth element is the public utility framing. AI is now the infrastructure of cognition. The structural response is to treat it as the public utility it has structurally become, with prices set by public commission, universal service obligations, common-carrier rules, and the regulatory apparatus that has historically managed essential infrastructure in the public interest. The institutional form is an AI public utility commission, with the mandate to regulate model deployment, pricing, access, and quality, modeled on the public utility commissions that regulate electricity, water, and telecommunications. The historical precedent is the long list of essential infrastructure that has, at various points, been brought under public regulation: telephones under the Communications Act of 1934, electricity under the Public Utility Holding Company Act of 1935, broadcasting, gas, water, the postal service, the transportation system. Each of these settlements was contested by the capital that owned the infrastructure at the time. Each was achieved through political organization adequate to the moment. Each, once established, produced an infrastructure that served the public for decades, until the privatization wave of the 1980s and 1990s began to reverse the arrangement. The present moment is the moment to extend the principle to cognition, before the privatization wave consolidates the cognitive enclosure beyond the reach of political action.

The six elements are internally coherent. They reinforce one another. The data trust provides the legal foundation for the public weights. The public compute provides the physical infrastructure on which the public weights can be trained. The codetermination ensures that the workers who interact with the deployed systems retain control over their labor. The social wage ensures that the dispossessed are not held hostage to the wage relation while the operation is being restructured. The public utility framing provides the regulatory apparatus under which the whole is administered. Each element supports the others. The program is structural in the way the operation it responds to is structural.

What remains to be addressed is the political question, which the present essay has gestured at and which the next essay in this sequence will address in full. The professional class that is now being proletarianized has, historically, supplied the analytical and organizing capacity of every major political transformation of the modern era. The class is now in the act of becoming aware of its dispossession. The democratic-socialist program described above is the response that addresses the structure of the dispossession. The right-populist program that is also available, and that is being prepared by the Trump-Vance-Thiel axis with the explicit support of Silicon Valley capital, is the response that diverts the dispossessed into nationalist and authoritarian politics while preserving the property relations that produced the dispossession. The professional class will choose one or the other. The next eighteen months will determine which.

The cognitive enclosure is one front of an offensive that is being conducted simultaneously against every commons capital can locate. The biological commons. The land commons in the Global South. The atmospheric commons. The semiotic commons. The relational commons. The same operation, performed by the same class apparatus, against whatever can be converted into property. The asymmetry that this essay has taken as its subject is the political feature that distinguishes the cognitive enclosure from the operations that preceded it. The cognitive commons has no concentrated source community to defend it because its source is everyone. Everything from everyone, everywhere, all the time. The dispossessed population is therefore, also, everyone. The political constituency that the cognitive enclosure has produced is, in scale, the largest political constituency in the recorded history of capitalism. The opportunity is the scale.

Only the political left has the vocabulary to describe this operation. The right does not, because the right is structurally aligned with the enclosing class. The center does not, because the center has committed itself to the redistributive containment strategy. The left, alone, has the analytical apparatus that this essay has assembled: Marx, Federici, Couldry and Mejias, Wark, Crawford, Zuboff, Benanav, Durand, Varoufakis, Muldoon, Bhattacharya, Jaffe, Hester, Du Bois, James, Williams, the long Black radical tradition, the social reproduction tradition, the contemporary feminist Marxism, the colonial-extraction analysis, the platform-socialism literature. The vocabulary is sufficient. The program is articulated. The political constituency exists at scale. What remains is the organizing.

The recorded history of professional-class proletarianization has more often produced fascism than socialism. The historical record is not destiny, but it is a warning that must be honored. The conditions under which the democratic-socialist resolution can win in the present moment are the subject of the next essay in this sequence. The present essay closes with the structural argument it has been building toward. Democratic ownership of the cognitive commons is the only structurally coherent response to the most ambitious single act of primitive accumulation in the recorded history of capitalism. The political left can articulate this response. No one else can. The 2028 cycle is the operational window in which the response can be assembled into a movement adequate to the moment. The work is now.

Bibliography

Primary Theoretical Texts

Federici, Silvia. Caliban and the Witch: Women, the Body and Primitive Accumulation. Brooklyn: Autonomedia, 2004.

Marx, Karl. Capital, Volume I: A Critique of Political Economy. Translated by Ben Fowkes. London: Penguin Classics, 1976. Originally published 1867.

Marx, Karl. Grundrisse: Foundations of the Critique of Political Economy. Translated by Martin Nicolaus. London: Penguin, 1973. (“Fragment on Machines,” composed 1857–58.)

Contemporary Marxist and Critical Apparatus

Ahmed, Sara. Complaint! Durham: Duke University Press, 2021.

Benanav, Aaron. Automation and the Future of Work. London: Verso, 2020.

Berardi, Franco “Bifo.” The Soul at Work: From Alienation to Autonomy. Translated by Francesca Cadel and Giuseppina Mecchia. Los Angeles: Semiotext(e), 2009.

Bhattacharya, Tithi, ed. Social Reproduction Theory: Remapping Class, Recentering Oppression. London: Pluto Press, 2017.

Couldry, Nick, and Ulises A. Mejias. The Costs of Connection: How Data Is Colonizing Human Life and Appropriating It for Capitalism. Stanford: Stanford University Press, 2019.

Crawford, Kate. Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. New Haven: Yale University Press, 2021.

Dean, Jodi. “Neofeudalism: The End of Capitalism?” Los Angeles Review of Books, May 12, 2020.

Durand, Cédric. Techno-féodalisme: Critique de l’économie numérique. Paris: La Découverte, 2020. English edition: How Silicon Valley Unleashed Techno-Feudalism: The Making of the Digital Economy. Translated by David Broder. London: Verso, 2024.

Fisher, Mark. Capitalist Realism: Is There No Alternative? Winchester: Zero Books, 2009.

Gray, Mary L., and Siddharth Suri. Ghost Work: How to Stop Silicon Valley from Building a New Global Underclass. Boston: Houghton Mifflin Harcourt, 2019.

Hester, Helen, and Nick Srnicek. After Work: A History of the Home and the Fight for Free Time. London: Verso, 2023.

Jaffe, Sarah. Work Won’t Love You Back: How Devotion to Our Jobs Keeps Us Exploited, Exhausted, and Alone. New York: Bold Type Books, 2021.

Liu, Wendy. Abolish Silicon Valley: How to Liberate Technology from Capitalism. London: Repeater Books, 2020.

Muldoon, James. Platform Socialism: How to Reclaim Our Digital Future from Big Tech. London: Pluto Press, 2022.

Srnicek, Nick. Platform Capitalism. Cambridge: Polity Press, 2017.

Varoufakis, Yanis. Technofeudalism: What Killed Capitalism. London: Bodley Head, 2023.

Wark, McKenzie. A Hacker Manifesto. Cambridge: Harvard University Press, 2004.

Wark, McKenzie. Capital Is Dead: Is This Something Worse? London: Verso, 2019.

Zuboff, Shoshana. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. New York: PublicAffairs, 2019.

The Long Black Radical Tradition (Front III)

Du Bois, W. E. B. Black Reconstruction in America, 1860–1880. New York: Harcourt, Brace and Company, 1935.

James, C. L. R. The Black Jacobins: Toussaint L’Ouverture and the San Domingo Revolution. London: Secker and Warburg, 1938.

Williams, Eric. Capitalism and Slavery. Chapel Hill: University of North Carolina Press, 1944.

Investigative Journalism and Documentary Sources

Perrigo, Billy. “OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic.” Time, January 18, 2023.

Reisner, Alex. “Revealed: The Authors Whose Pirated Books Are Powering Generative AI.” The Atlantic, September 2023.

Reisner, Alex. “The Unbelievable Scale of AI’s Pirated-Books Problem.” The Atlantic, March 2025. (Includes the searchable LibGen tool.)

Sun, Jasmine. “[NYT essay on the San Francisco Consensus, referenced in ‘They Work for the Bots’].” The New York Times, 2026. (Specific citation per the predecessor essay’s footnotes.)

Industry Documents

OpenAI. Economic Blueprint. October 2025. (The “OpenAI white paper” referenced in the predecessor essay.)

Amodei, Dario. “Machines of Loving Grace: How AI Could Transform the World for the Better.” Personal essay, October 2024.

Legal Cases and Statutes

Cases

Association for Molecular Pathology v. Myriad Genetics, Inc., 569 U.S. 576 (2013).

Authors Guild, Inc. v. Google, Inc., 804 F.3d 202 (2d Cir. 2015), cert. denied, 578 U.S. 941 (2016).

Bartz v. Anthropic PBC, U.S.D.C. for the Northern District of California, current litigation (filed 2024).

Bowman v. Monsanto Co., 569 U.S. 278 (2013).

Diamond v. Chakrabarty, 447 U.S. 303 (1980).

Kadrey v. Meta Platforms, Inc., U.S.D.C. for the Northern District of California, current litigation (filed 2023; key documents unsealed 2024–2025).

The New York Times Company v. Microsoft Corp. and OpenAI, U.S.D.C. for the Southern District of New York, filed December 27, 2023.

Statutes

Vagabonds Act 1547 (1 Edw. 6 c. 3), England.

Vagabonds Act 1572 (14 Eliz. 1 c. 5), England.

Act for the Punishment of Rogues, Vagabonds, and Sturdy Beggars 1597 (39 Eliz. 1 c. 4), England.

Act for the Relief of the Poor 1601 (43 Eliz. 1 c. 2), England. (The “Elizabethan Poor Law.”)

Act of Settlement 1662 (14 Car. 2 c. 12), England.

Poor Law Amendment Act 1834 (4 & 5 Will. 4 c. 76), United Kingdom. (The “New Poor Law.”)

General Inclosure Act 1773 (13 Geo. 3 c. 81), Great Britain.

Otmoor Inclosure Act 1815, United Kingdom (private parliamentary act).

Communications Act of 1934, 48 Stat. 1064 (1934), United States.

Public Utility Holding Company Act of 1935, 49 Stat. 803 (1935), United States.

Predecessor Essay

Potter, Del. “They Work for the Bots.” delpotterphd, Substack, May 2, 2026. https://delpotterphd.substack.com/p/they-work-for-the-bots

Adjacent Series Referenced

Potter, Del. “The Political Economy of Psychedelic Enclosure” (seven-part series). delpotterphd, Substack, 2025–2026.

Political Reference

Mamdani, Zohran. New York City Mayoral Campaign Platform, 2025. (Referenced for: rent freeze, free public buses, public childcare, public grocery stores in food deserts.)